Introduction

The Halite 3 edition has ended and I managed to get rank 18. Here you will find my bot strategy and the tools I managed to create. I'll try to make this post-morem very detailed to avoid you the hassle of reading the code, that you can still find here.

If you didn't participate this edition, I recommend you to read the game rules first for this to make sense.

Disclaimer

All the code written for the competition was with fast prototyping in mind, so don't expect quality code. It must remain in the context of a programming competition.

My bot

I divided my bot into two separate parts, the task assignment and the navigation. The task assignment will set for each ship the target location and then the navigation will figure out how to get there safely.

Map

At the start of each turn I process the map to gather all the static information I could need of each cell, this is done in Map::Process and Map::CalculateNearInfo.I gather this information for each cell:

- Whats the min/max halite an enemy ship has that could move to this cell in the next turn

- Is the cell inspired this turn?

- For each k-th or less distance from the cell (with

kfrom 0 toMAX_CELL_NEAR_AREA_INFO):- halite sum

- number of cells

- average halite

- number of ally ships

- number of ally ships not going to drop

- number of enemy ships

- a

std::vector<std::pair<int, Ship*>>for ally and enemy ships containing <distance, Ship*> sorted by distance asc - some dropoff information

For example, with this information I could easily know the average halite in a radius k for any cell in the map.

Parameters

To load and separate the parameter for each game mode/map size I used different files in a cascade manner. I load the parameters in the following order:

- best_default.json

- best_

2/4p.json - best_

2/4p_32/40/48/56/64.json - JSON provided in the first argument or a path to a JSON file

If a parameter is found multiple times the last one found is kept. Ref

Friendliness

The friendliness is a value describing how "friendly" or "unfriendly" a cell is. This value is used for task assignment and navigation, its one of the core functions of my bot.

This is how it is calculated:

- the friendliness value initializes to zero

- for each distance

dfrom 0 toDISTANCES - 1(4):- the contribution for ships at distance

dwill beDISTANCES - d - for each ally ship at distance

d:friendliness += (1.0 - (ship.halite / constants::MAX_HALITE)) * (DISTANCES - d) - for each enemy ship at distance

d:friendliness -= (1.0 - (ship.halite / constants::MAX_HALITE)) * (DISTANCES - d) - for each ally dropoff at distance

d(dropoffs are like ships with 0 of halite):friendliness += DISTANCES - d

- the contribution for ships at distance

You can see that ships with more halite are less dangerous, because is less probable that someone attacks with a 950 halite ship.

You can find the function CalcFriendliness here.

Dropoff location

A hard problem that I think no one managed to solve it optimally. I went with a few heuristics and some restrictions found analyzing top bots.

My bot only allows one dropoff creation per turn, so each turn I choose where the next dropoff should be placed and let the ships go and build it when they need to drop.

Description of the function BestDropoffSpots:

- general restrictions:

- turn <= 0.75 *

constants::MAX_TURNS - number of ally ships >= number of dropoffs created *

dropoff_ships_needed(a parameter)

- turn <= 0.75 *

- consider each cell on the map:

- if the cell has more than 3000 halite then

score = INF + cell.halite; - if

distance_to_closest_dropoff>constants::MAX_WIDTH*dropoff_map_distance(another parameter)- the number of ally ships in a radius of 5 must be > 2

- the number of ally ships in a radius of 5 must be >= the number of enemy ships in a radius of 5

- (the average halite in a radius of 5) / (map average halite) >=

dropoff_avg_threshold(yet another parameter) - if all of the avobe conditions are met then

score = avgHalite / distance_to_closest_dropoff^2;

- if the cell has more than 3000 halite then

- the dropoff considered is the one with the higher score

Task assignment

The assignment of tasks is done like this:

Note: if a ship has been assigned then it can be used in the next category

- Dropoffs: get the BestDropoffSpots, if there is a dropoff to be build then the closest ship is assigned to build it. Also

constants::DROPOFF_COST - ship.halite - cell.haliteis reserved to pay for it - Drops:

- if a ship can't get to the nearest dropoff in

toroidal distance from ship to closest dropoff* 1.255 turns before the game ends then we enable theallow_dropoff_collisionflag (endgame flag) and override this ship as dropping - during the rest of the game, the ship will be assigned to drop if it was previously assigned to drop or its halite is >

DROP_THRESHOLD(970) - also, if a ship has < 300 halite then it exits the dropping state (if it hasn't been overrided)

- if a ship can't get to the nearest dropoff in

- Attacks (disabled on endgame):

- for each enemy ship:

- the closest ship to the enemy ship will be assigned to ram the turtle if the friendliness value of the position of the enemy ship is >

friendliness_should_attack(a parameter)

- the closest ship to the enemy ship will be assigned to ram the turtle if the friendliness value of the position of the enemy ship is >

- for each enemy ship:

- MiningThis is where it gets weird...:

- Score every ship/cell combination (I call a combination an edge):

- the cell has some restrictions:

- cell's halite >

min(35, map_average_halite * 0.8) - cell's friendliness value >

friendliness_mine_cell(a parameter)

- cell's halite >

- the score is calculated this way:

profit = cell.halite + (average_halite_in_radius_4 / map_average_halite) * 100; time_cost = distance_from_ship_to_cell * 4 + distance_from_cell_to_closest_dropoff * 0.8; if (cell.inspiration && constants::INSPIRATION_ENABLED) { profit *= 1 + constants::INSPIRED_BONUS_MULTIPLIER; } if (num_players == 2) { profit += min(0, num_ally_ships_in_radius_4) * 15; profit -= num_enemy_ships_in_radius_4 * 25; } else { // num_players == 4 profit -= num_ally_ships_in_radius_4 * 35; } score = profit / time_cost;

Some of the values hardcoded there were parameters but I changed it the last week to do some fine tuning

- the cell has some restrictions:

- Sort the edges: order by priority descending and if two edges are to close by ε=0.05 then tie-break with the lowest travel time

- Iterate all the edges in order, if the edge's ship and edge's cell hasn't been assigned yet then assign it

- Score every ship/cell combination (I call a combination an edge):

- Check for unassignment: sometimes ships don't get any taskThis can happen if any mining cell is adequate, so I force them to go to the nearest dropoff

Navigation

At this point, every ship has a target location assigned, so we must find a way to reach the targets fast and without traffic-jams or collisions.

Navigation Options

The first thing to compute the navigation is to generate the 5 NavigationOptions possible for each ship to end up with 5*number_of_ships options or less.

A NavigationOption describes a possible movement by a ship:

- optionCost: a number describing the cost of this movement

- position: the position where the ship would end up

- direction: the direction the ship must take to go to the position

- ship: the ship

To calculate each option cost I use this method:

- Run a min-path algorithm from the ship task target minimizing the following function:

The initial value of option cost is the value at the option's target position. The function will try to minimize the turns needed then tie-break using the toroidal_distance (this fixes some edge cases) and then with the cost of navigation.double ratio() const { return (turns * 1000.0) + toroidal_distance + haliteCost / 10000.0; }

Note: when running the path algorithm the ships with a distance < 1 from their targets are treated like ghost ships, they probably will stay still the next turns so thats taken into account. - Then, if the option's target position is free (that means there are no enemy or frozen shipsShips that can't afford to move so they have to stay still):

- if an enemy ship could reach here in the next turn, then I need to decide whether to dodge this possible enemy or not:

- dodge if the friendliness value is below the parameter

friendliness_dodge. This is the core collision avoidance strategy

if the ship should dodge then the option cost is modified:

So in the case of a tie the option with the most friendliness will have less costoptionCost += INF + (10000 - friendliness);

- dodge if the friendliness value is below the parameter

- if an enemy ship could reach here in the next turn, then I need to decide whether to dodge this possible enemy or not:

- else (there is a enemy ship in the destination, so we decide wheter to attack):

- two parameters are involved deciding if a ship should attack:

friendliness_can_attackandfriendliness_should_attack(withfriendliness_can_attack<friendliness_should_attack).

We discard this option if the friendliness value is less thanfriendliness_can_attack, if not then the option keeps the original option cost value (the path ratio) and if the ship wants to go that direction then it can and maybe will crash.

Also, if the friendliness value is higher thanfriendliness_should_attackthen we are in a position where crashing is a very good idea and the option cost is overrided:

Here tie-break with the ship's halite so is better to collide with a ship with less halite.optionCost = 1 + (ship->halite / constants::MAX_HALITE);

- two parameters are involved deciding if a ship should attack:

Now we have all the possible options scored we can assign them to the ships. During the competition I used two ways:

The easy way

This is a very simple way to do it and I'm sure many people tried this or something similar.

While there are no ships not assigned to an option:

- Pick the ship with the highest priority (the task priority value) not assigned yet

- Sort the options by lowest cost, pick the first that is available (that means, no other ship has already picked that option's target position)

- Mark the picked option's target location as unavailable

- If where we are moving there is a ship, compute this ship next to avoid possible self collisions

This way ensures that there will be no self collisions and ships are choosing their options according to their priority.

Navigation simulation

Then I tried simulating the navigation as a whole, each ship picking an option and minimizing the sum of all the options picked by randomly swapping options. You can see the messy code.

Here is a high-level overview of how it works:

- While we have time to continue (ms till timeout > 50):

- Copy the best simulation into a new object

- Pick a random

NavigationOptionand select it:- check if the picked option's target location is already being used by another option, if it is then deselect the option and keep a reference to the

dangling_ship, the ship that had the option being deselected. - select the option (update the data structures)

- if we have a

dangling_shipthen we solve conflicts:- select the lowest cost option available for the

dangling_ship - if none is available, then randomly select an option for this ship and repeat

- select the lowest cost option available for the

- check if the picked option's target location is already being used by another option, if it is then deselect the option and keep a reference to the

- Compute the total cost of the new simulation (sum all the picked option costs)

- If the total cost is lower than the best simulation's cost, replace it

I end up using this method, though is not worth that much μ (IIRC ~1.5) as I expected. For local testing I lower down the simulation time to be able to run games in a minute.

Ship spawining

My spawning strategy is mainly based on some ifs with magic numbers. This is how ShouldSpawnShip decides:

bool Strategy::ShouldSpawnShip()

{

...

// HARD MAX TURNS

if (game->turn >= 0.8 * constants::MAX_TURNS)

return false;

// HARD MIN TURNS

if (game->turn < 0.2 * constants::MAX_TURNS)

return true;

// HARD MAX COLLECTED

if (game->map->halite_remaining / (double)(constants::MAP_WIDTH * constants::MAP_HEIGHT) < 60)

return false;

// HARD MAX SHIPS

if (my_ships > 20 && my_ships > enemy_ships * 2)

return false;

double max = 0.65;

switch (game->num_players) {

case 2:

switch (game->map->width) {

case 32: max = 0.60; break;

case 40: max = 0.62; break;

case 48: max = 0.65; break;

case 56: max = 0.68; break;

case 64: max = 0.70; break;

}

break;

case 4:

switch (game->map->width) {

case 32: max = 0.36; break;

case 40: max = 0.40; break;

case 48: max = 0.45; break;

case 56: max = 0.52; break;

case 64: max = 0.55; break;

}

break;

}

// last minute change that seemed to perform better

max *= game->num_players == 4 ? 0.85 : 1.15;

return game->turn <= max * constants::MAX_TURNS;

}Those big switchs are the core of the function, they decide when to stop spawning ships. The magic number were extracted analyzing games from Rachol, teccles and TheDuck314 using the tool described in the Games analyzer section (with some fine tuning by hand).

Having those numbers hardcoded didn't seem like a good spawning strategy and I think I could have performed better.

Parameter optimization

I used two techniques to find the optimal values for the parameters of my bot. I managed to optimize some parameters better using one technique or the other and I end up with a mix of both.

Both techniques use the workers to distribute the matches described on the tools section.

Genetic Algorithms

I used my own script using NodeJS without a GA lib, you can find evolve.js here.

In all my testing, I used less than 50 of population, because playing games is really expensive. Usually not optimizing more than 4-5 parameters at a time. It reaches a point where the population has very low diversity so I just regenerate all the individuals every 300 generations or so and always keep the best.

This is my first time using GAs for something useful. How my GA algorithm works:

- Generate a population if this is the first generation (or we have more than 300 generations) using random values

- Calculate the fitness of each individual:

- Play 10 games (each individual with the same set of seeds)

- Compute the fitness like this:

for(var r of results) { fitness += (r.collected) + // collected=halite_collected / map_initial_halite (r.rank == 1 ? 10 : 0); } - Save the individual if this is the highest fitness ever

- Sort the population by fitness descending

- Crossover to generate the new 50% of the population:

- Pick from the first 30% two individuals to be crossed

- The new individual will pick every parameter with a 50% chance of being from any of the two parents

- Mutate this new individuals (like explained below)

- Add the other 50% of the population mutating the bests of the old population:

for(var parameter of parameters) {

new_value = old_value;

var R = Math.random();

if(R < 0.15) {

new_value = parameter_min + Math.random() * (parameter_max - parameter_min);

} else if(R < 0.45) {

new_value += (Math.random() > 0.5 ? -1 : 1) * (parameter_max - parameter_min) * Math.random() * 0.15;

}

new_value = clamp(new_value, parameter_min, parameter_max);

}abs(A[parameter] - B[parameter]) > 0.01 * (parameter_max - parameter_min)). Mutate duplicated individuals until there are no moreI don't know if the diversity problem was caused by using a small population or I messed up (more probable) with some of the % used in the algorithm. Either way, I managed to get good results from it.

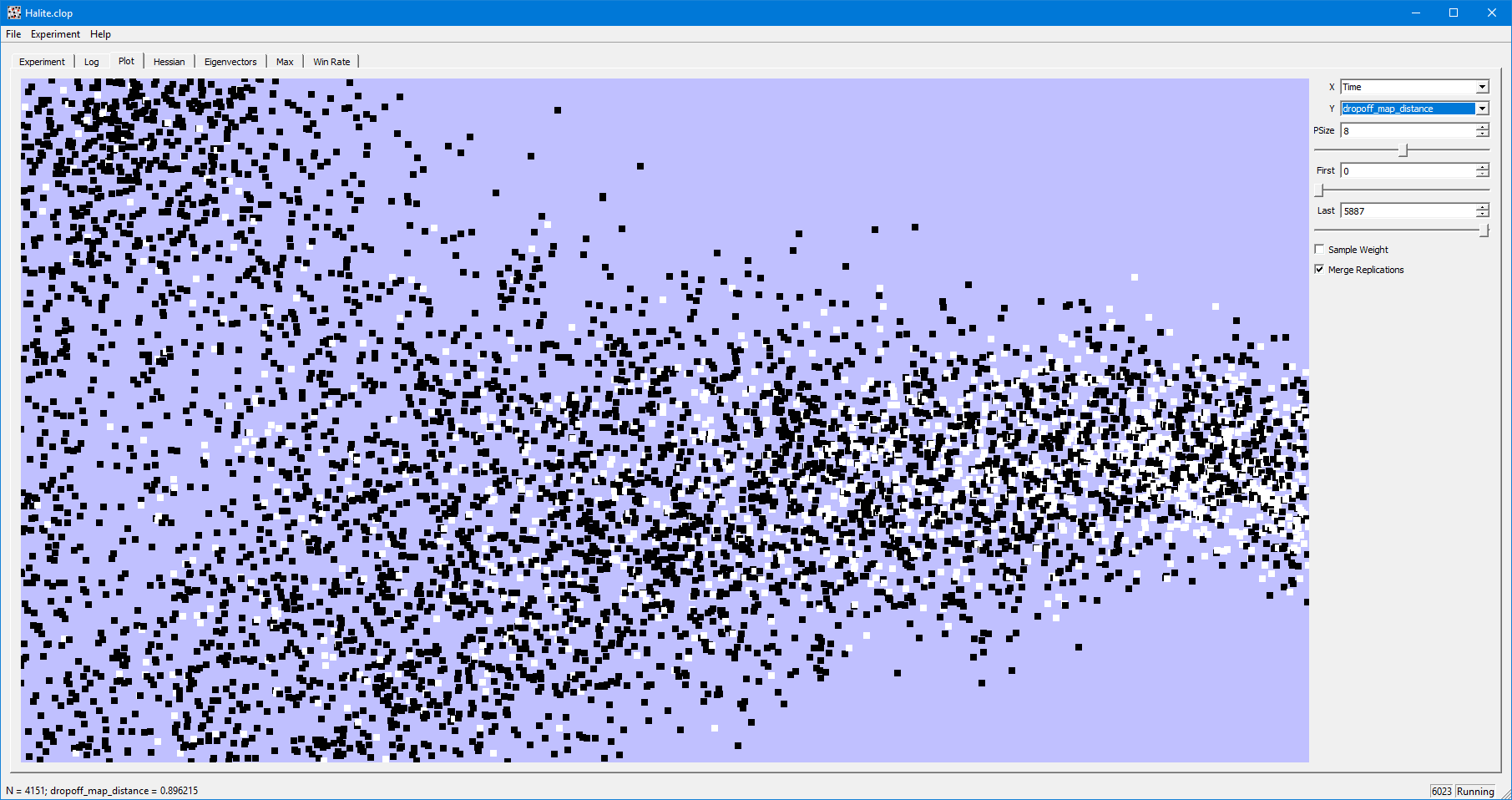

CLOP

I found this tool because @janzert talk about it on the Discord server. CLOP stands for Confidence Local OPtimization. I already had the distributed games system working fine so I give it a shot.

This is Halite.clop, the config file I used:

Script node ../halite3-bot/Worker/play_game.js

Name Halite

DrawElo 0

H 3

Correlations all

Processor any

Processor any

Processor any

# Many "Processor any" as the concurrency available in the queue

IntegerParameter a 0 10

LinearParameter b -1 1From my tests, is better to optimize no more than 7-8 parameters at a time with this program.

Tools

Distributed matches

Distributed matches

I needed run lots of matches concurrently on different machines to optimize parameters, so I used a NodeJS with Bee-Queue and a Redis server to distribute the work.

A brief description of each of the scripts inside the Worker folder:

worker.jsConnect to the queue and wait for jobs to arrive. A job is a JSON object and must have this structure:{ seed: 1548609917, size: 64, bots: [{ bot: "v85", arguments: "" }, { bot: "v106", arguments: "{\"parameter\": 42}" }], replay: false }When the match ends, the worker will send the result to the queue like this:

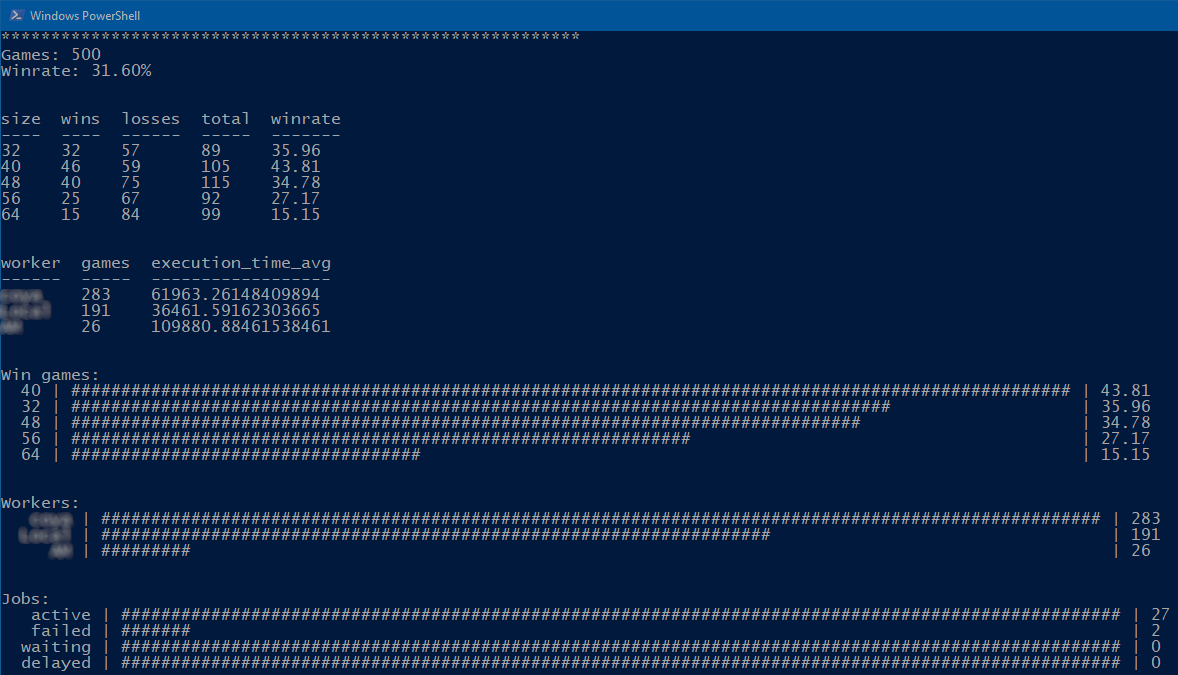

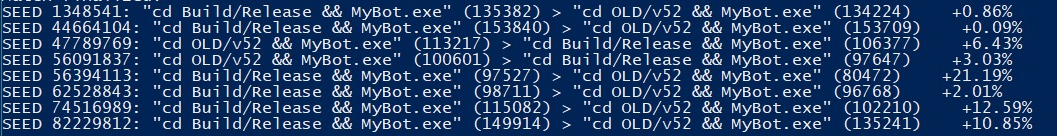

{ worker: "My Worker", crash: false, execution_time: 42069, map: { seed: 1548609917, width: 64, height: 64, total_halite: 823132 } players: [{ index: 0, bot: "v85", rank: 2, score: 176235, collected: 0.2141, terminated: false }, { index: 1, bot: "v106", rank: 1, score: 247774, collected: 0.30101, terminated: false }] }status.jsConnects to the queue and subscribes to job finished events to present a report of the last 500 games:evaluation.jsGeneratesNUM_GAMESgames withNUM_PLAYERSplayers in them with the seedSEEDand prints the results. How I use it:#node evaluation SEED NUM_PLAYERS NUM_GAMES node evaluation 42 2 10By default it uses the bot

../../Build/Releasefor testing within the same machineplay_game.jsPlays a game and outputsWorLif the bot namedLatestwon the match. The other bots are randomly picked from a pool using the seed provided in the first argument.

Used for CLOP.

This is how I setup everyting:

- Prepare the Worker folder with the bots that will be used

The format must to be /bots/BOT_NAME/MyBot.exe - Install and configure a Redis server. Set a password if you are going to use it over the internet (which was my case, a friend let me use some cores of his computer)

- Install all the dependencies using npm

- Modify

config.jsonwith the appropiate values for each of the computers, like# of concurrent games, the IP of the Redis server and password - Execute

run_forever.batthat will run and restart the scriptworker.jsin case of a crash

This is how it looks when games are being played - Optionally execute

status.jsto be informed about the games being played (last 500)

Games analyzer

I did an script (analyzer.js) to analyse a batch of games to find patterns in players. I managed to get some dropoff and spawning information with it.

Some examples

This is an analysis of winning games from teccles in 2P. I tracked how many ships he had when he spawns a new ship. In this case, we can see that he never spawns a ship when he have 5 more ships than the enemy. In 4P the threshold is 10. I have to say that this is not the final version of teccles and I'm sure that he changed this. I tried this strategy and it didn't work for me.

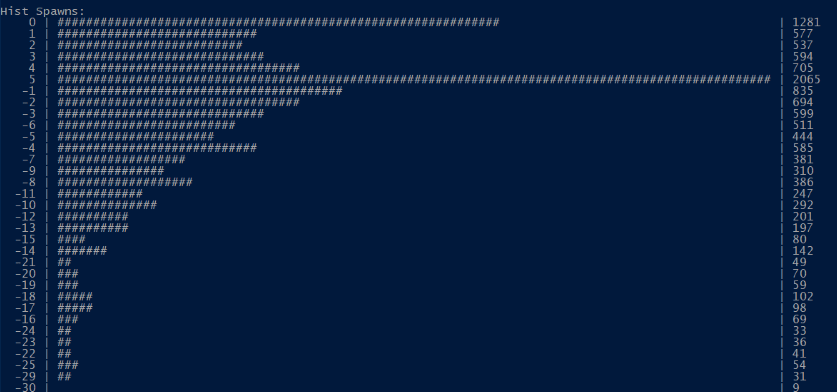

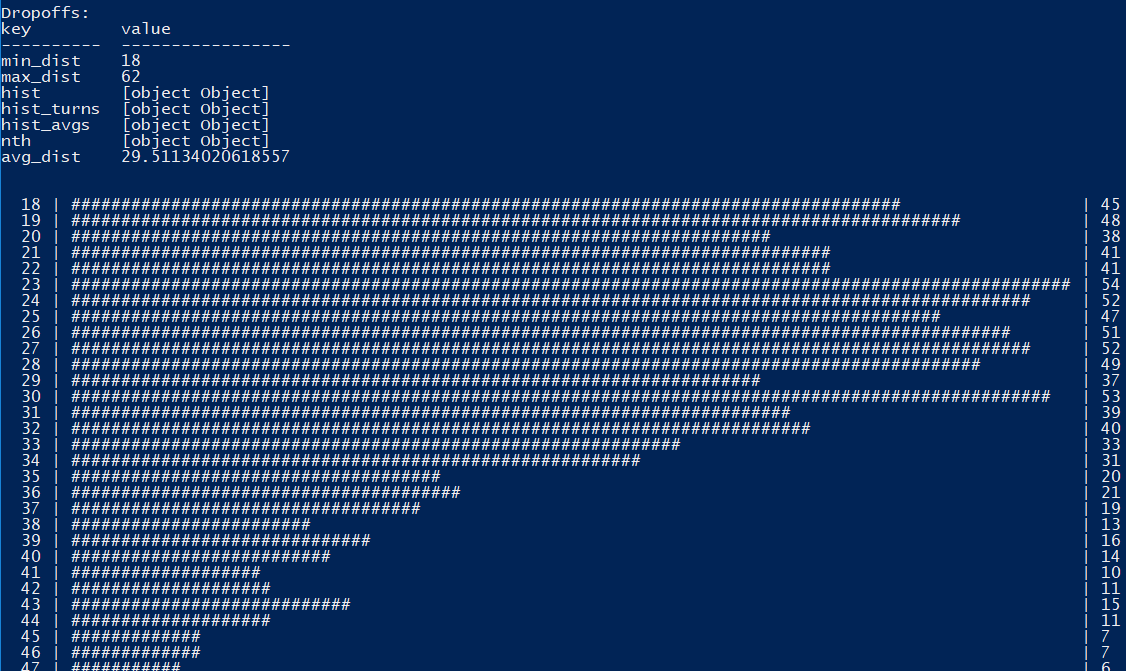

This is an an analysis for Rachol v85 in 2P when he was rank 2. The histogram below shows the distance between a new dropoff and the closest one. You can see he only create dropoffs being 18 or more units away. Also we can see (not in the image) the minimum number of ships and turn for every n-th dropoff. You can find the full log here.

With a similar script (which I lost) I computed the last turn a player spawned a ship in every 2P/4P/map size combinations. Averaging that infomation from top players and some tuning by hand I just hardcoded the max turn to spawn a ship in my bot. For example, this was the output for TheDuck314 (when he was rank 1):

{

"2p_64x64": {

"sum": 4432.203758995905,

"count": 63,

"min": 53.18725099601593,

"max": 82.00455580865604,

"avg": 70.35244061898263

},

"2p_40x40": {

"sum": 3591.3348946135834,

"count": 56,

"min": 51.288056206088996,

"max": 66.7447306791569,

"avg": 64.13098026095685

},

"4p_64x64":{"sum":5366.533864541831,"count":91,"min":50.59760956175299,"max":59.76095617529881,"avg":58.972899610349785},"4p_40x40":{"sum":4338.407494145199,"count":101,"min":37.23653395784544,"max":52.69320843091335,"avg":42.95452964500197},"4p_56x56":{"sum":5188.88888888889,"count":96,"min":44.44444444444444,"max":57.65199161425576,"avg":54.05092592592593},"2p_32x32":{"sum":4640.547263681595,"count":81,"min":1.2437810945273633,"max":64.6766169154229,"avg":57.290706959032036},"2p_56x56":{"sum":4573.539000002854,"count":66,"min":54.088050314465406,"max":76.37614678899082,"avg":69.2960454545887},"4p_48x48":{"sum":4780.530973451327,"count":96,"min":39.823008849557525,"max":55.309734513274336,"avg":49.797197640117986},"2p_48x48":{"sum":5838.590099940728,"count":88,"min":52.43362831858407,"max":70.66974595842956,"avg":66.34761477205372},"4p_32x32":{"sum":2400.0000000000005,"count":68,"min":28.855721393034827,"max":49.004975124378106,"avg":35.29411764705883}

}

Combat database

To analyze other bots

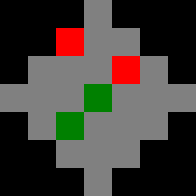

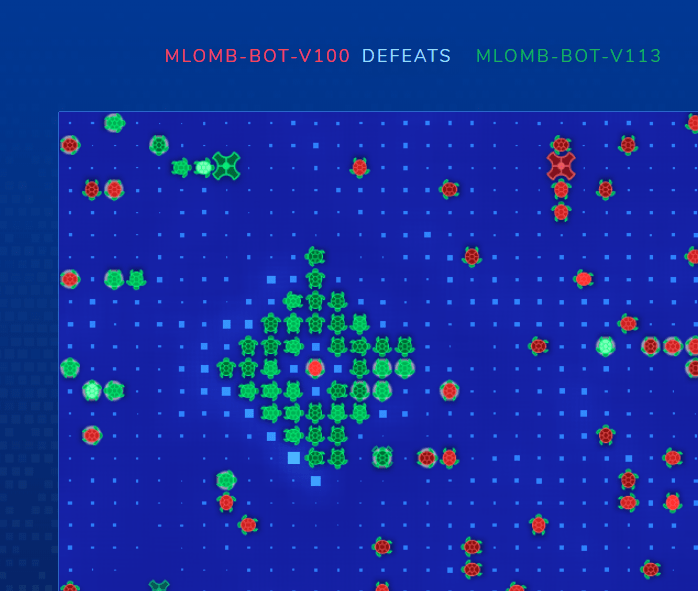

In my try to decipher teccles collision avoidance/combat, I created gen_db.js which reads a bunch of games and generates for each ship movement a key based on the enviroment before moving like this: xxx*xxxxx**-xxx*****x***+***x*+***xxx***xxxxx*xxx. Messy huh? Lets show it in a different format:

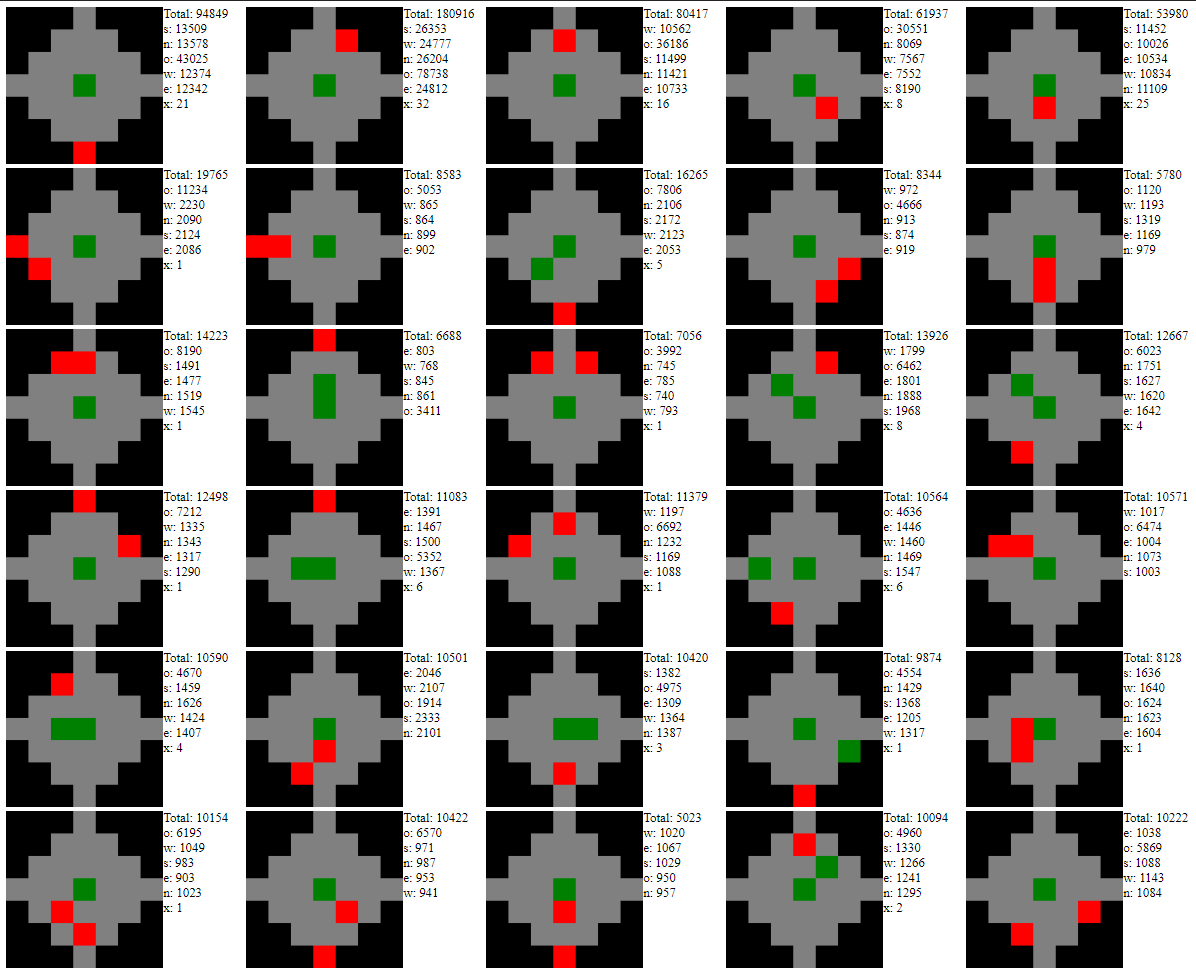

As you can see, green squares represent ally turtles and the red ones enemy turtles. Each key is mirrored and flipped to account for rotations. Now, for each of those keys I save the direction chosen by every ship in that situation. And get something like this:

Total: 312

o: 196

s: 33

w: 38

n: 24

e: 21So, for teccles ships in that situation, is very probable that the ship in the middle stay still (what the o means).

Some more examples (displayed with db.html) for lots of teccles games:

If you are interested in the full raw data you can find it in counts_db.json (~238MB).

Last minute bug detected: when rendering db.html, accounting for mirrored-/flipped didn't update the cardinal. If you are interested you can view this image without mirroring/flipping here.

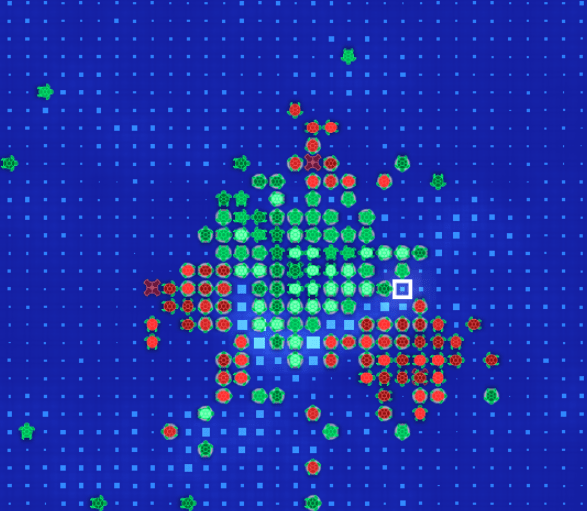

To analyze my bots

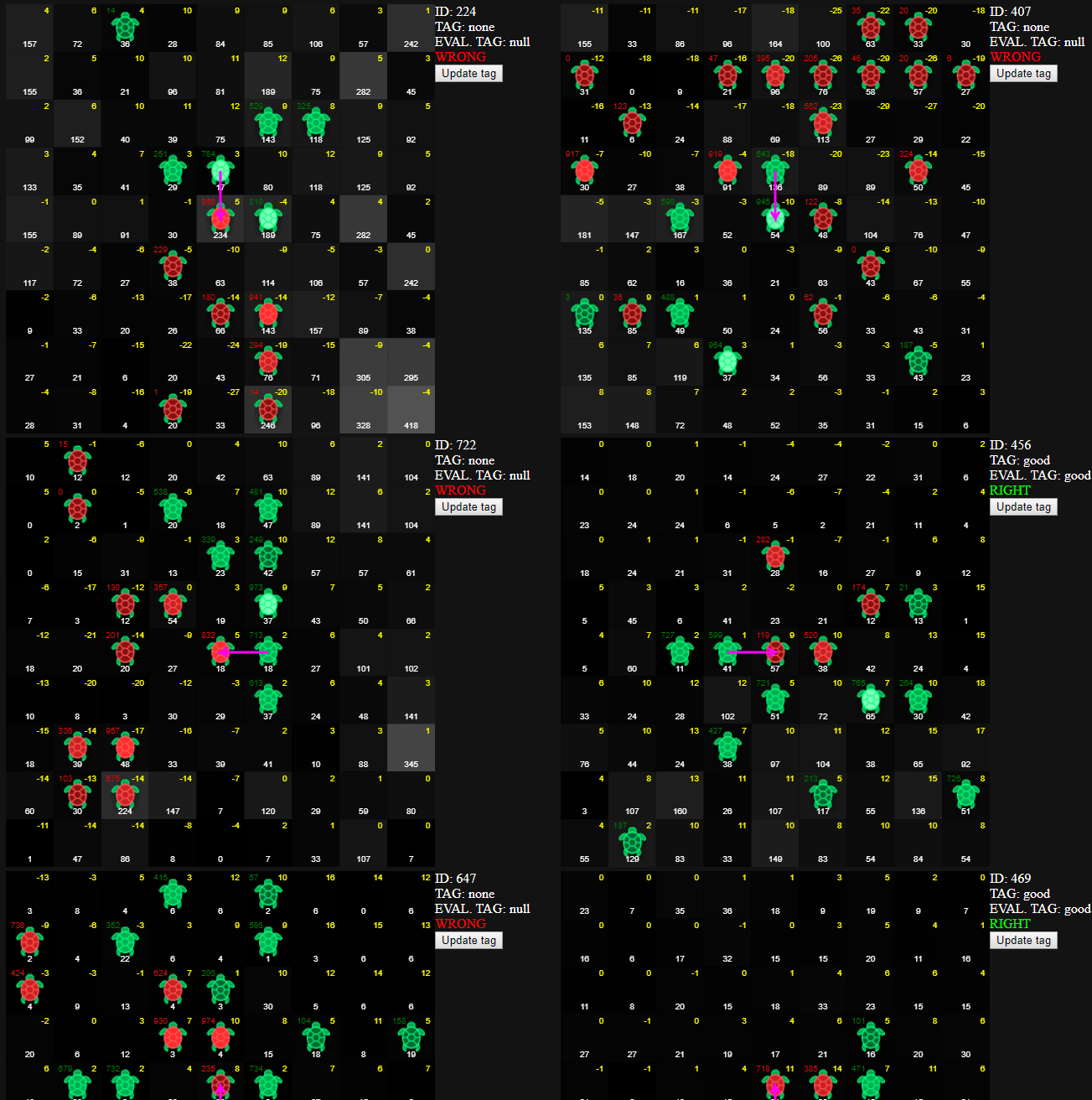

I made a little app to hot reload debug my ShouldDodge(Ship, Destination) function, which unfortunately didn't make it to the final bot but it was cool to showcase it anyway.

First I generated lots entries playing games locally, each entry containing all the map information for a single ship movement so I could simulate being in the real bot and test live the algorithm. An example of an entry (it's a JSON blob).

Each entry can be tagged manually with a string, for example 'good' or 'bad' and then in the editor you provide a function that takes the entry data returns the possible tag and compare both tags in all the tagged entries and gives some %s.

The tagger

The yellow numbers are the friendliness values

The code editor

Replay viewer

Like last year, fohristiwhirl made an excellent game replayer called Fluorine. But I needed to manipulate the map data that I had already programmed in halite.js so I just went a did a fast replayer to display and alter variables in realtime.

Statistics & Analysis Page

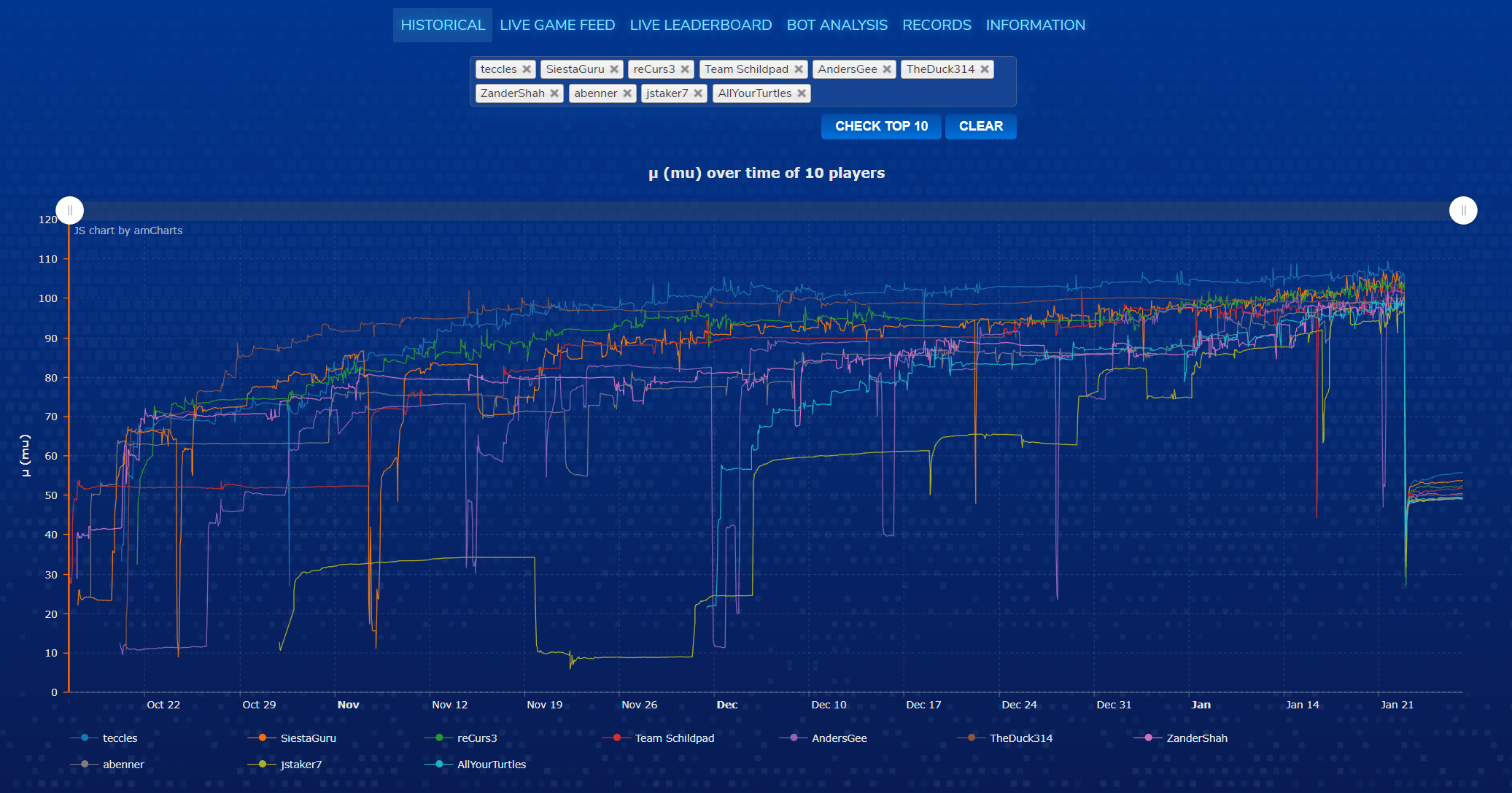

This is the most notable tool I did this edition, you can find the page here. Last year I did something similar but it was late in the competition and not very useful (only a μ graph and winrate percentages, also being ugly). This year, from the first day of the open competition (October 16th, 2018) I started to crawl the games into a MySQL database. With the database slowly growing I started from what I have last year, the historical graph showing the μ evolution overtime time. After that, the analysis page which later become the most important part of the page, showing the winrates in 2P/4P and combined.Some time later I did the live game feed where you can filter by the number of players, the map size and even select players to filter the games, and the games appear being played.

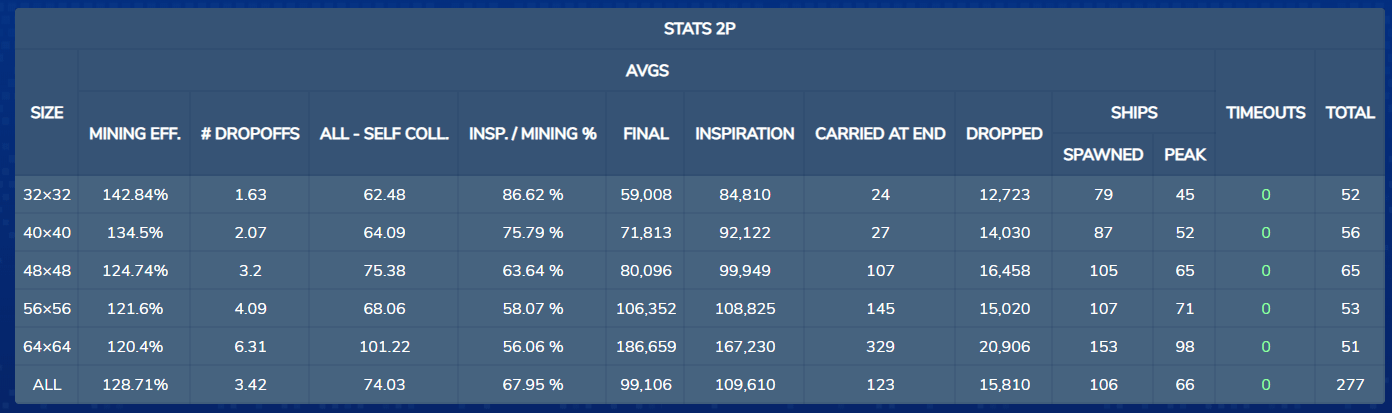

Before mid season I made a PR adding to the game engine some metrics we discussed in the Discord server to add to the analysis. The metrics added were:

last_turn_ship_spawn: The last turn the player spawned a shiptotal_dropped: Total amount of halite lost due collisionscarried_at_end: Amount of halite on ships last framedropoff_collisions: The number of ships involved in collisions with any ships, allied or not over a dropoffships_spawned: The number of ships spawnedships_peak: The maximum number of ship spawned at the same timemap_total_halite: Total halite available at the startexecution_time: Total execution time of this match in ms

Not all of them finally made it into the page, but they were nice to have.

Historical graph

The graph is calculated using the average μ in the matches every hour.

There are a couple of things here I couldn't implement, someone suggested to show the σ (sigma) with like an area graph avobe and below the line, extending it 3*(average σ). The graph with lots of players would look too crowded and be a mess. Also someone else suggested a way to differentiate between versions in the same line, but I had the same problems of the last idea. Maybe I will consider this ideas again in the next edition.

Live game feed

The live game feed lets you filter by the number of players, the map size and even select players to filter the games, and they appear as being played. I mainly used it to find specific matchups where I lose.

Live leaderboard

Here you select the player you want to track and every 30 seconds (or the time selected) it will show you the difference in μ and σ with the last update of you and the players close to you.

Also, suggested by @Lunariz I added the option to rank by μ, so you can check your rank ignoring the σ that you may have because a low game count due a recent submission.

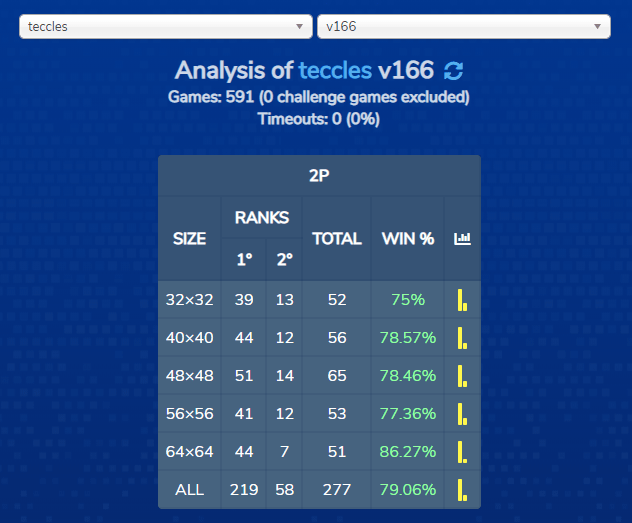

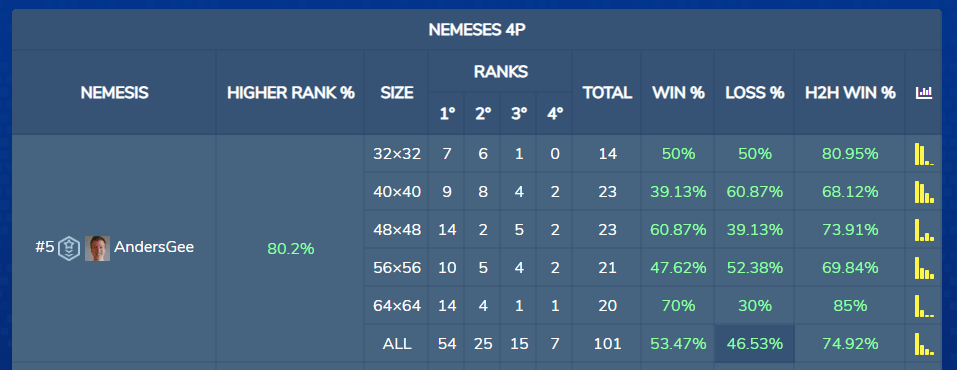

Bot Analysis

By far, the most important piece of the page. You can select any player/version and get a detailed breakdown of some aspects of that bot.

Features displayed per game mode (2P/4P) and map size:

- Winrate/places separated and combined

- Nemeses breakdown, you can see winrates/places against specific players

- Head to head percentages in 4P suggested by @teccles. Answers the question

What percentage of games against X did I finish higher in? - The stats table, which was build purely of suggestions:

- AVG(

mining_efficiency) * 100: Average of mining efficiency - AVG(

number_dropoffs): Average number of dropoffs - AVG(

all_collisions-self_collisions): Average of non self collisions - AVG(

total_bonus/total_mined) * 100: Average of total_bonus / total_mined as % - AVG(

final_production): Average of halite at the end of the game - AVG(

inspiration): Average of halite collected from inspiration bonuses - AVG(

carried_at_end): Average of halite in ships at the end of the game - AVG(

total_dropped): Average of halite lost due collisions - AVG(

ships_spawned): Average of ships spawned - AVG(

ships_peak): Average of ships alive at the same time timeouts: Number of timeouts

- AVG(

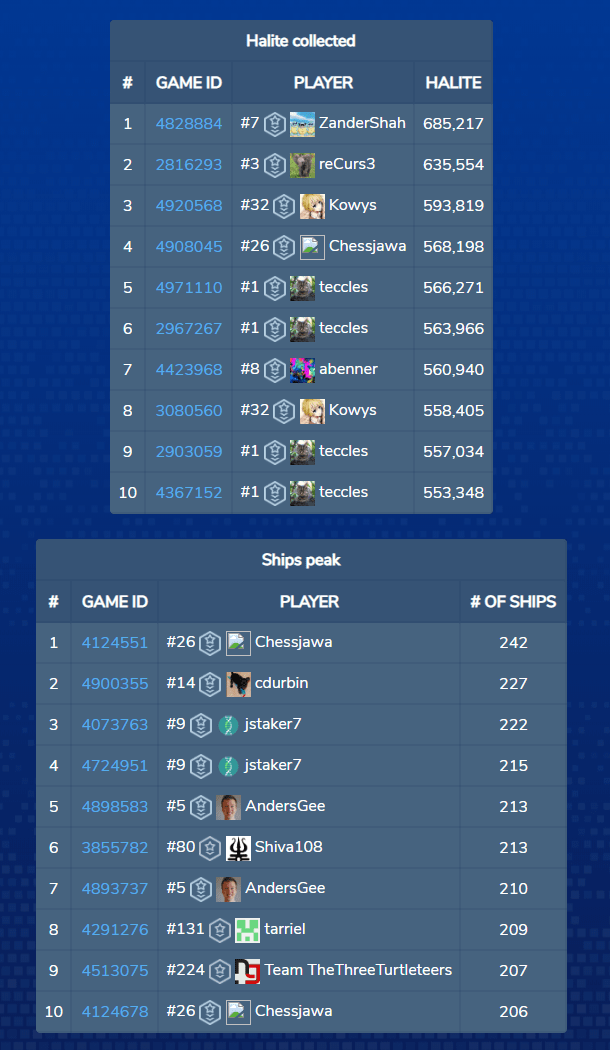

Records

This was a not that useful for analyzing bots, but it was a funny thing to have.

Records being displayed:

- max/min μ ever reached

- max halite collected in a single game

- max number of ships alive at the same time in a single game

- max number of non self collisions of a player in a single game

- extreme high/low halite maps, the maps with the most/least halite. Original idea by fohristiwhirl here

- extreme high/low density maps, the maps with the highest/lowest halite density. Suggested by @AlanTuring via PM

- highest μ on v1. Implemented when @blasterpoard suddenly appeared in top 20 with his first version. Link

Wrap up

I want to thanks TwoSigma & @janzert for mantaining the competition alive. I'll wait for more Halite edition to come.

The community on the Discord server was amazing and I couldn't have built the stats page without the suggestions from all of you.

You can find other source code and post-mortems here.

Some funny situations to wrap up